How the Viral AI Startup Fraud Involving 'Delve' Is the Tip of the Iceberg

The 'Delve' fraud is the latest example of how AI limitations are not generally understood by young founders in San Francisco with high valuations and naive questions

What Happened?

This past Friday, a viral and extremely detailed blog post exposed Delve, an AI compliance startup valued at $300M, as fraudulently enabling security compliance certifications for over 400 startups. It exemplifies the currently rampant overpromises made by young AI startup founders who do not appear to understand the limitations of AI, nor the mechanics of the industry they’ve been applying it to. To visualize the scope this typifies, there are over 2,000 startups in SF alone, collectively valued at hundreds of billions of dollars, with funds seeded by various banks through private equity.

Wait, what is Delve?

To summarize the very detailed post referred to above (which includes many granular pieces of evidence), Delve is a startup that pre-generated templates confirming security and health data compliance certification before any validation took place, then enabled shell companies based in India to rubber-stamp the results in order to meet its marketing promise of ‘certification in days not months’. After an employee accidentally shared one of the templates in a Slack channel, they were found to contain the same language for every company, despite very different tech stacks.

It is also ironic that ‘delve’ is a common word that overindexes in LLM training data, has as such influenced language outside of LLMs, and has since become (even more ironically) common in the vernacular. The issue here, though, is the AI startup fraud that the word could be associated with going forward.

Which customers are most affected?

Also ironically, other AI startups. Delve has been part of Y Combinator, a formerly respected organization whose startups have recently been known to be customers of each other, contributing to a Ponzi-like revenue falsification that (as a separate issue) will at some point come to an end. The issue now implies that a large majority of AI startups at Y Combinator have bogus compliance certifications, leading not only to customer data risk but also to another nail in the coffin of highly valued startups that have been misleading investors who misunderstand the limitations of AI.

What makes these founders so brazen?

The founders made the Forbes 30 Under 30 list in 2026, and much of Delve’s marketing emphasized that they were MIT dropouts rather than the advantages of the service itself.

What amplifies this as a train wreck?

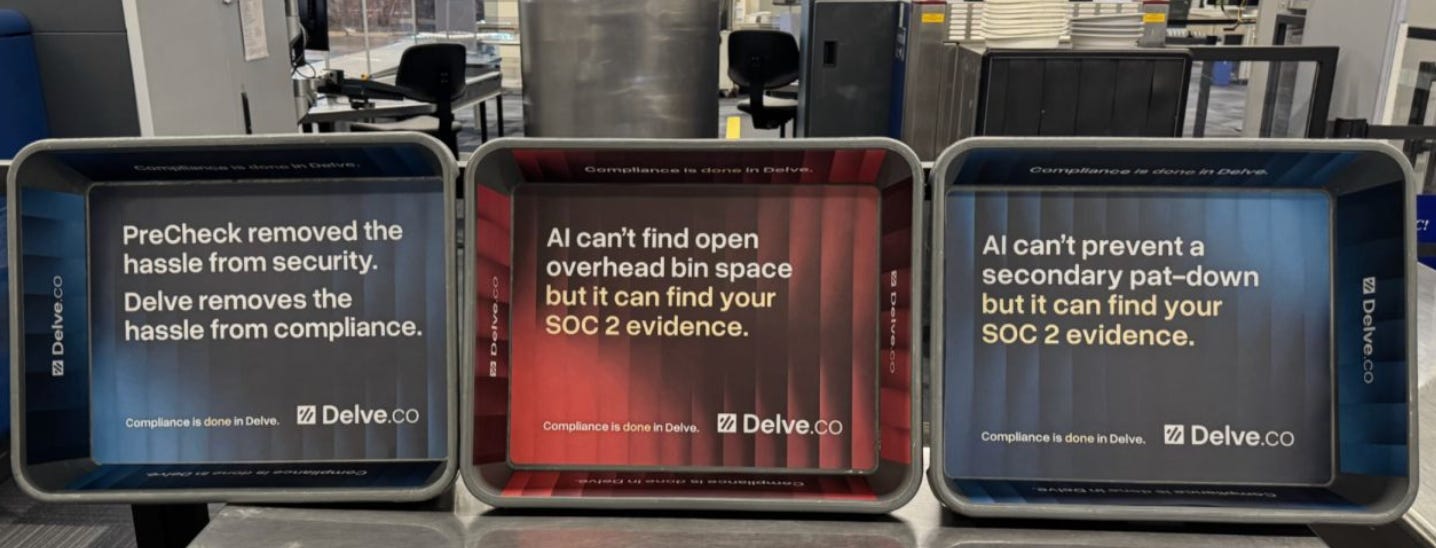

Comically, within the past month Delve had stepped up its marketing to brand every TSA tray at Silicon Valley’s San Jose airport.

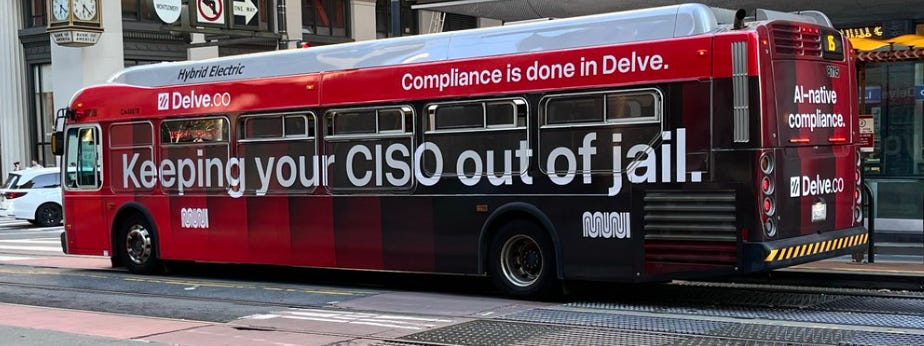

It also has a large city bus campaign currently running in San Francisco, with messaging suggesting they are ‘keeping your CISO out of jail’.

Any more irony?

Yes - it turns out they ‘certified’ their own security through their own fraudulent process, and as such left many data points exposed that have been pilfered since the story broke this week. These include employee background checks, performance reviews, and lists of customers who are now by default in violation of HIPAA and SOC 2 compliance, as well as product IP, if that is any evidence of their intentions.

Did Delve respond, and what is their side of the argument?

Although it’s been suggested that the blog post could be an internal leak or the work of a competitor, Delve responded with a denial of responsibility that did not so much as address the issues identified as blatant fraud. It also possibly obfuscated the issue further by manipulating SEO, optimizing for separate terms that only bots can see in the HTML of the denial. Blaming AI may not work if this issue heads to the US DOJ, given recent AI fraud crackdown initiatives.

What does this exemplify about other AI startups?

Beyond basic compliance that protects consumer privacy, there are three aspects:

First, a misunderstanding of AI, e.g. applying ‘agents’ to a task using NLP pattern recognition against a corpus of training data, but without an understanding of the compounding error that occurs with increasing complexity.

Second, a misunderstanding of the underlying business that any startup is being applied to. A potential issue here could be that high valuations are assigned as if these things were not misunderstood.

Third, high valuations are likely not assigned with due diligence (nor does any annualized revenue figure account for churn, but that’s a separate issue I’ve been writing about for over 2 years).
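
To make the compounding error from the first aspect concrete, here is a minimal sketch of how reliability decays as an agent chains more steps together. It assumes every step succeeds independently with the same accuracy, which is a simplification rather than a measurement of any real system, and the function name `chain_success_rate` and the 95% figure are illustrative only.

```python
def chain_success_rate(step_accuracy: float, steps: int) -> float:
    """Probability that every step in a chain succeeds.

    Assumption (hypothetical model): each step succeeds independently
    with the same probability, so the chance of a fully correct run is
    step_accuracy ** steps.
    """
    if not 0.0 <= step_accuracy <= 1.0:
        raise ValueError("step_accuracy must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return step_accuracy ** steps


if __name__ == "__main__":
    # Illustrative per-step accuracy of 95%, not a figure from any vendor.
    for steps in (1, 5, 10, 20, 50):
        rate = chain_success_rate(0.95, steps)
        print(f"{steps:>2} steps at 95% each: {rate:.1%} end to end")
```

Under these assumptions, a task that takes 20 steps at 95% accuracy per step comes out fully correct only about 36% of the time, which is the gap between a convincing demo and a compliance process that has to be right every time.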

More to come in this space.